We’ve funded a range of projects to guide our roadmap for the future

Tokenised Authentication for Research Computing Services (TARCS)

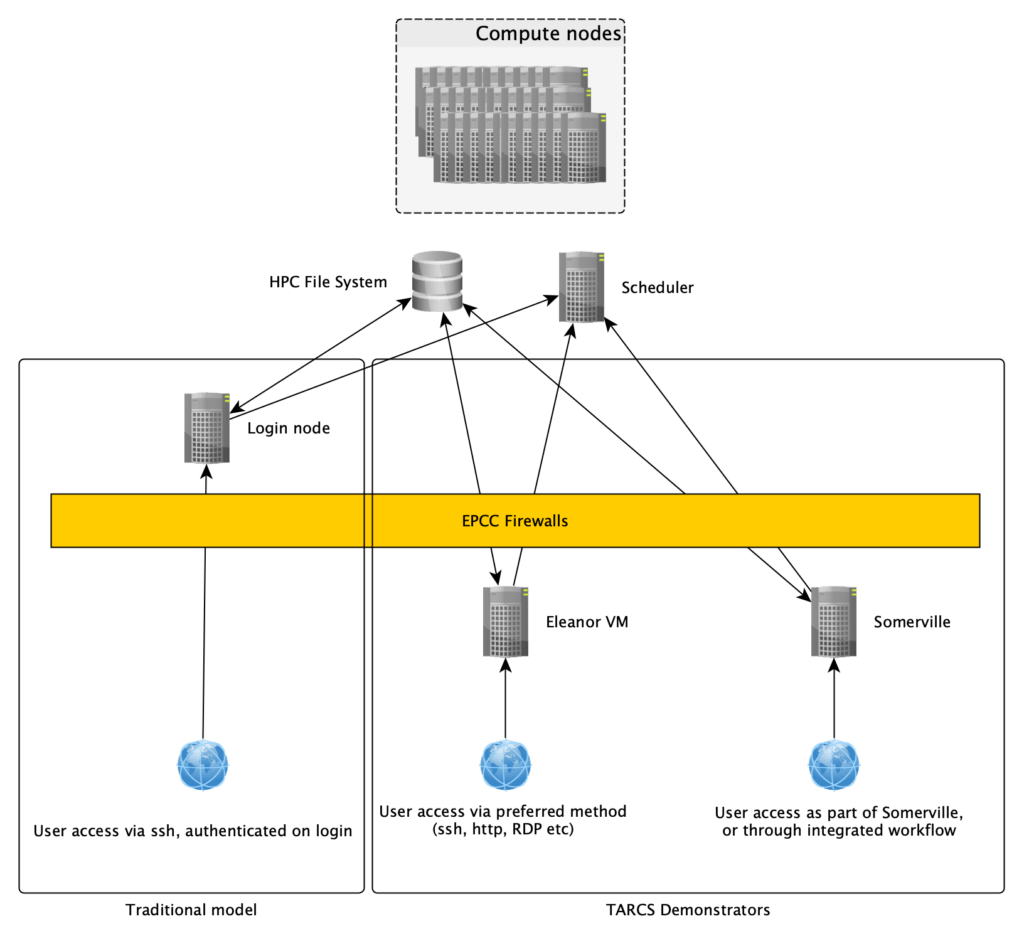

HPC and other batch-scheduled research computing services have historically been accessed via dedicated login nodes. These are specialised servers, typically accessed via ssh and operating in a command-line (text-based) environment, which provide users with access to the resources of the service: namely the file systems used to store data and the batch scheduler which schedules work onto the compute cluster itself.

It is not typically possible to directly access resources such as the scheduler or file system externally, for example from a different research computing service or a cloud instance. In this project we aim to demonstrate making these resources directly externally accessible, in a secure manner, using tokenised authentication.
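As a rough illustration of what token-based external access could look like, the sketch below builds (but does not send) an HTTP request that submits a batch script to a scheduler endpoint using a bearer token. The endpoint URL, the `/jobs` path, the JSON payload shape and the token value are all hypothetical assumptions for illustration; they do not describe the interface this project will deliver.

```python
import json
import urllib.request


def build_job_request(base_url: str, token: str, script: str) -> urllib.request.Request:
    """Build (but do not send) a token-authenticated job submission request.

    The "/jobs" path and the {"script": ...} payload are hypothetical.
    """
    payload = json.dumps({"script": script}).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url}/jobs",
        data=payload,
        method="POST",
        headers={
            # The token stands in for the user's identity, so no ssh
            # session on a login node is needed to reach the scheduler.
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
    )


request = build_job_request(
    "https://scheduler.example.org/api",  # hypothetical endpoint
    "example-short-lived-token",          # hypothetical token value
    "#!/bin/bash\nhostname\n",
)
print(request.get_method(), request.full_url)
```

In a sketch like this, a client running on an external VM or cloud instance would present the token with each request, and the service would be expected to validate it before acting on the user's behalf.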

One of the limitations of traditional login nodes is that, in order to maintain service security, users cannot be allowed root privileges, which prevents them from making certain customisations to their environment. Making these services available externally, for example to a user-managed VM, would allow users to deploy a variety of research enablement services which are not possible without root privileges, or which are not appropriate for a shared login node. These services include:

- Continuous Integration and Continuous Development servers supporting research software development

- Workflow management servers allowing user groups to integrate external workflows with the internal batch scheduler

- Licence servers for research software

In this project we will demonstrate and assess the use of tokenised authentication to integrate the scheduler and file system services of the HPC service Cirrus with a VM in the University of Edinburgh Eleanor service, as well as with the IRIS Somerville cloud platform. A variety of workflows will be explored, and this approach will be assessed from both a user and a technical perspective.

Lead organisation

EPCC, The University of Edinburgh

Principal investigator

Kieran Leach